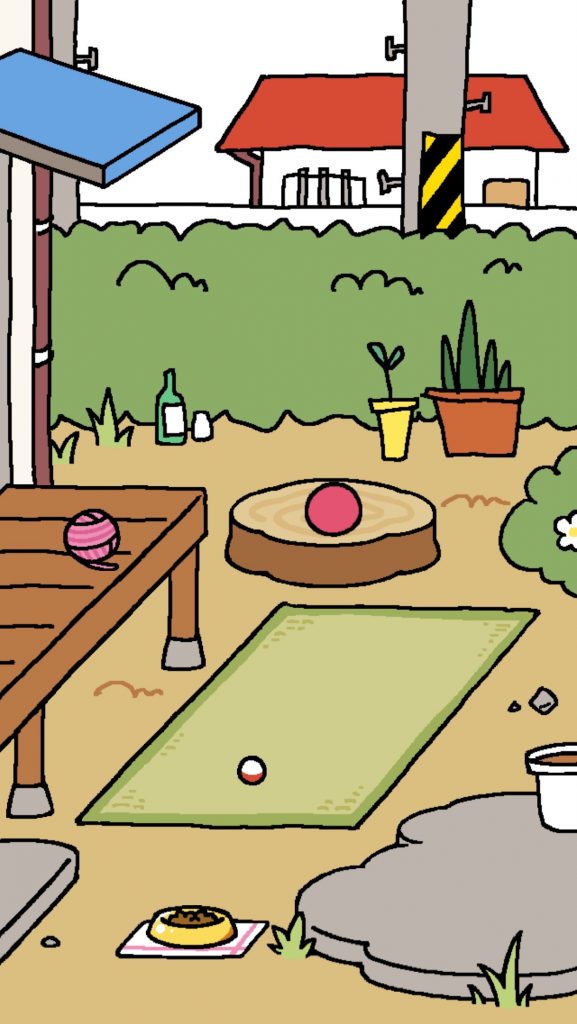

We have been discussing what we can learn about visualization from gaming. One broad area is how games use HUDs (Heads-Up Displays). Another is how games use the player's time. Perhaps the most counterintuitive use of time is the postponement typical of pet simulators and of the recently translated and popular Neko Atsume: Kitty Collector. In pet simulations like the Tamagotchi, the chronotope is not the intense, fast, immersive experience of a first-person shooter, but the slow everyday rhythms and spaces of life. You carry the toy with you and feed your pet in real time. For stretches of time there is little you can do unless you speed up the clock. The play lies in how you sustain it with small interventions over time. What if we had visualizations that postponed gratification?